Why opinion surveys and voter outcomes are often polls apart

Note: This article was originally published at The Conservative Woman on Thursday 21 November 2019

The British electorate is becoming ever more wary of trusting political opinion polls. Ever since-

- the 2015 general election, when all polls predicted a hung Parliament but the voters delivered a clear Conservative majority;

- the 2016 EU Referendum, when most polls confidently predicted a Remain victory; and

- the 2017 general election, when few polls predicted the hung Parliament which resulted, most differing merely on the size of the majority Theresa May was expected to gain,

a growing number of voters have treated the polling industry’s prognostications at best with an increasingly large pinch of salt, and at worst with outright cynicism, suspecting that many polls are biased and intended to influence public opinion rather than merely to measure it.

And not without reason. As political academic and psephologist Matthew Goodwin of Kent University says, Brits are cautious about the current Brexit election, given that memories of 2017 are still comparatively fresh.

Why Brits are cautious about polls ahead of their “Brexit election”

Today

Conservatives = 42%

Labour = 29%

**13 point lead**

At same point in 2017 election (which produced hung parliament)

Conservatives = 48%

Labour = 30%

**18 point lead**

#ge2019 #r4today

Matthew Goodwin (@GoodwinMJ), November 19, 2019

In a recent article for CapX, Number Cruncher Politics’ Matt Singh gave an informative technical explanation of why opinion polls tell such different stories, especially at election time. There’s a surprising amount of variation both in the methodologies which polling firms employ and in the statistical models which they use to interpret the results; surprising in the sense that, in a competitive industry, sampling methodology hasn’t converged on whichever approach has been found to deliver the most accurate predictions.

But there’s also a human factor. As Singh says, when attempting to compare views and voting patterns across different periods, many people are actually quite bad at remembering how they voted on a previous occasion. Not all pollsters try to adjust for poor recall, and those which do tend to ‘weight’ respondents’ recall of their previous votes in different ways.
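For readers who like to see the mechanics, here is a minimal sketch of what weighting by recalled past vote can look like. It is not any named pollster's actual method, and Singh's article does not prescribe one; the party labels, the ten-person sample, the 50/50 "actual" result and the function names `past_vote_weights` and `weighted_shares` are all hypothetical, chosen purely for illustration.

```python
from collections import Counter

def past_vote_weights(recalled, actual_shares):
    """Give each respondent a weight so that the weighted mix of
    recalled past votes matches the actual previous result."""
    n = len(recalled)
    counts = Counter(recalled)
    # weight = actual share of that party / share of sample recalling it
    return [actual_shares[p] / (counts[p] / n) for p in recalled]

def weighted_shares(current, weights):
    """Weighted share of current voting intention per party."""
    total = sum(weights)
    sums = {}
    for party, w in zip(current, weights):
        sums[party] = sums.get(party, 0.0) + w
    return {party: s / total for party, s in sums.items()}

# Hypothetical sample: 6 of 10 respondents recall voting Con last time,
# although the (hypothetical) actual result was an even split.
recalled = ["Con"] * 6 + ["Lab"] * 4
current = ["Con"] * 6 + ["Lab"] * 3 + ["Con"]
actual_shares = {"Con": 0.5, "Lab": 0.5}

weights = past_vote_weights(recalled, actual_shares)
unweighted = weighted_shares(current, [1.0] * len(current))
weighted = weighted_shares(current, weights)
print(unweighted)  # raw shares of current intention
print(weighted)    # shares after past-vote weighting
```

In this made-up sample the raw Conservative share of 70 per cent drops to 62.5 per cent once the over-represented group is weighted down. If respondents misremember how they voted last time, the weights themselves are wrong, which is one reason two firms can turn similar raw data into different headline figures.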

To that I’d be inclined to add the further potential for error which comes from the likelihood of respondents not answering truthfully, for whatever reason. I’d also suspect that, with the far more fickle, politically-volatile, and less traditionally tribal electorate that seems to be the inevitable consequence of Britain’s developing political re-alignment, more people have genuinely not yet made up their minds about which way they will vote in three weeks’ time.

YouGov’s Anthony Wells, meanwhile, also offers an interesting perspective at UK Polling Report on how the different methodologies used by the different polling companies affect their results. Significant variations occur in the choices given to respondents: some prompt only for the main parties, not even giving an ‘Other’ choice for minor parties; some prompt only for the parties most likely to stand in a specific constituency; and some even allow second preferences.

Moreover, what Wells terms ‘house effects’ mean that, currently, ICM, ComRes and Survation tend to show lower Conservative leads, while Deltapoll, YouGov and Opinium tend to show higher ones. What I found particularly interesting is that it’s consistently the pollsters who publish their polls timed to hit the Sunday press which report the highest leads for the Tories, while those timed for the midweek press show smaller ones.

Bearing all this in mind, it’s perhaps worth considering two of the latest polls published to see what can be gleaned from what they say. Or maybe from what they don’t say.

First, the Ipsos MORI poll on Britons’ top concerns in the run-up to the election, published on Monday 18 November, and in particular the following:

“Twenty-one per cent of the public mention the environment and pollution as a major issue for Britain – the highest recorded score since July 1990.”

So 79 per cent of the population don’t. The wisdom of crowds in evidence, perhaps. But it was the breakdown by socio-economic grouping of that 21 per cent, mentioned lower down in the press release, which looked significant to me.

It’s very noticeable how concern for ‘the environment and pollution’ – now conveniently conflated by both the Left and the Green movements to encompass both genuine, anti-pollution environmental stewardship and political ‘climate-change’ activism – as a ‘major concern’ is disproportionately concentrated among ABs socio-economically, and in the south of the country geographically.

Whether the Tories’ willingness to embrace Green-ery and so-called ‘de-carbonisation’, via higher enviro-taxes and making energy more expensive, in order to try and retain their potential LibDem defectors in Outer London, the South-East and the South-West, is going to play well among the less-affluent working-class voters they are chasing in the Midlands and North, and on whom the impact of higher Green taxes and dearer energy will disproportionately fall, is a moot point.

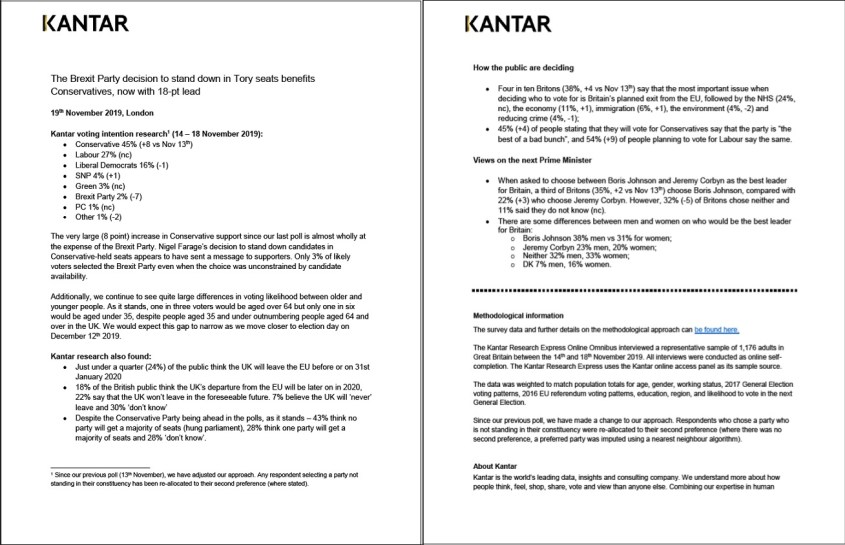

Next, the update from Kantar, published on the afternoon of Tuesday 19 November, in follow-up to their previous polling report published on Wednesday 13th November, and summarised in their latest press release:

The effect of Brexit Party leader Nigel Farage surrendering to Conservative pressure – or alternatively, depending on your viewpoint, recognising electoral reality – by standing down Brexit Party candidates in all 317 currently Conservative seats, is clearly shown. But how come 43 per cent of respondents think that no party will get an overall majority, when, in the same sample and survey, the Conservatives have a polling lead of 18 per cent over Labour? Is that because of the possibility of inbuilt priors – the ‘house effects’ – corrupting the methodology, in the statistical sense, as described earlier in the articles by Matt Singh and Anthony Wells? Who knows?

Possibly the most astutely prescient polling comment this past week, however, has come from polling guru Sir John Curtice; he observes that, with both the Conservative and Labour parties led by politicians disliked by most voters, this election is actually turning out to be an unpopularity contest. Presumably, our next Prime Minister will be the party leader who is least unpopular with voters.

For years we’ve mocked politicians who, in response to media challenge about adverse poll ratings, have robotically intoned the mantra “The only poll that matters is the one on [insert election date]”. Perhaps we should have given them a little more credit?
